AI Accounting Benchmark: New OpenAI GPT-5.4 Model Tops 19 AI Systems in Real Accounting Workflow Test


Woosung Chun is the CFO of DualEntry, with experience in corporate finance, accounting, strategy, and acquisitions. He previously built the M&A and Finance teams at Benitago from scratch and led them through more than 12 acquisitions in 2 years. He graduated with a BS from NYU Stern. At DualEntry, Woosung writes about AI in accounting, revenue recognition, foreign currency accounting, hedge accounting, and ERP modernization for finance teams navigating complex, multi-entity environments.

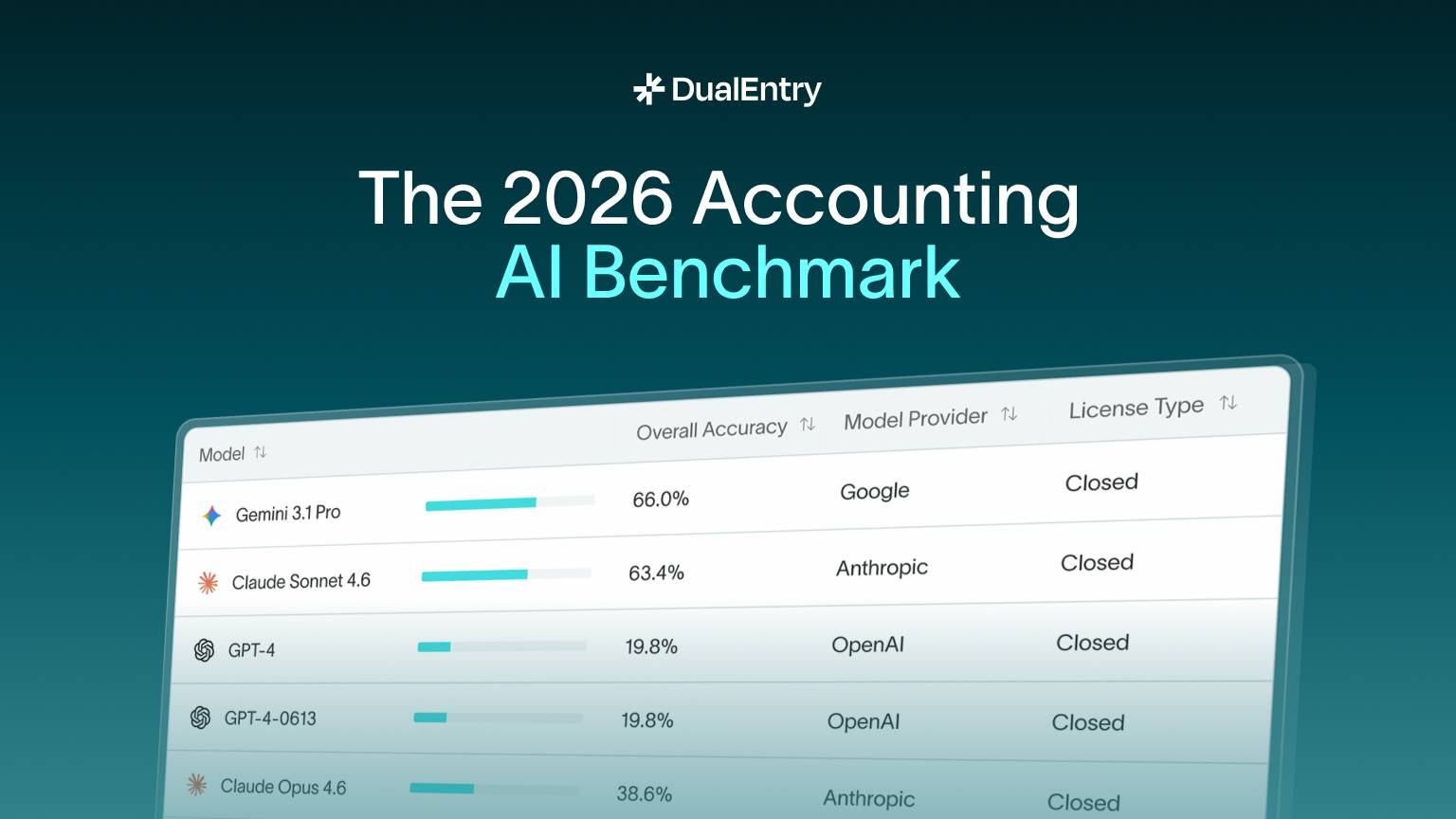

Independent benchmark across 101 accounting tasks finds leading AI models still struggle to reach enterprise-grade financial accuracy

NEW YORK — March 2026

DualEntry today released the results of a large-scale benchmark evaluating how modern AI models perform across real accounting workflows. The benchmark tested 19 leading AI models on 101 domain-specific accounting tasks, covering transaction classification, journal entry creation, bank reconciliation, financial reporting, and month-end close operations.

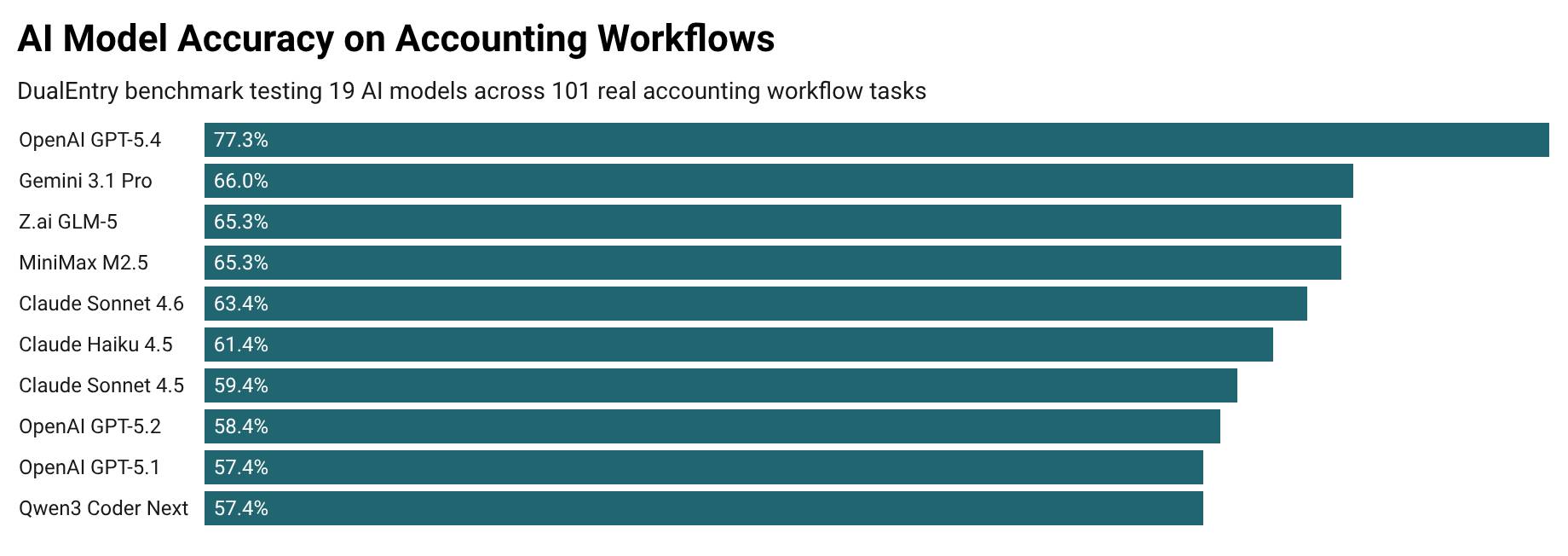

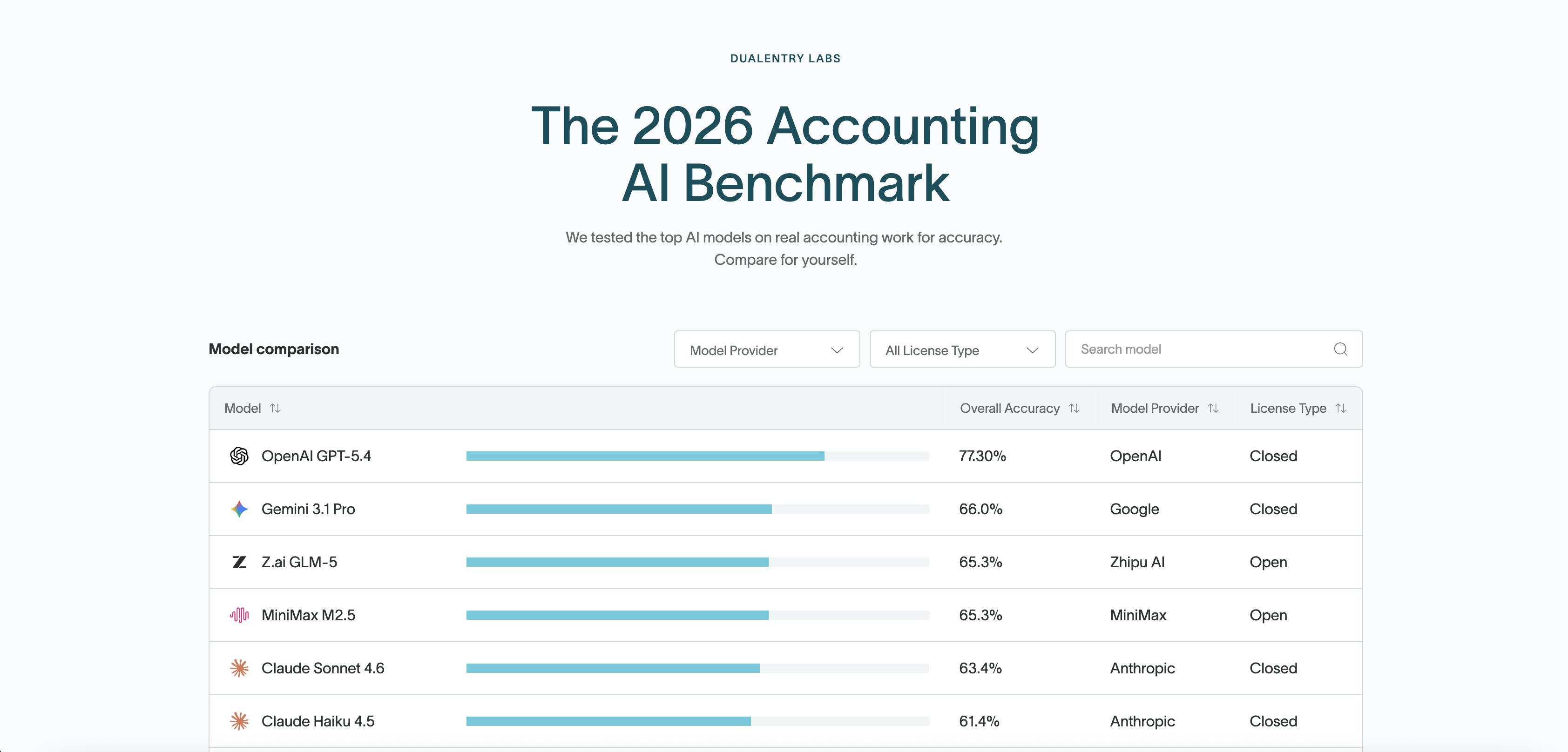

The newly released OpenAI GPT-5.4 model achieved the highest overall accuracy at 77.3%, more than 11 percentage points ahead of the second-best model, Gemini 3.1 Pro (66%).

Despite rapid improvements in reasoning models, the results highlight ongoing reliability gaps in financial automation: no model exceeded 80% accuracy, and most systems failed more than one-third of accounting tasks.

Benchmark Overview

We evaluated 19 leading AI models across 101 real accounting workflows, including journal entries, reconciliation, and transaction classification tasks.

- Models tested: 19

- Accounting tasks: 101 real workflows

- Top score: GPT-5.4 — 77.3% accuracy

- Failure rate of the top model: ~23% of tasks

Key Findings

- OpenAI GPT-5.4 achieved the highest accuracy at 77.3%.

- The second-best model, Gemini 3.1 Pro, scored 66%, more than 11 percentage points behind GPT-5.4.

- Most models scored below 65% accuracy across accounting workflows.

- Older models such as GPT-4 scored only 19.8% on the same task set.

- Even the best-performing model still fails roughly 1 in 4 accounting tasks.
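
The headline figures above follow from simple arithmetic on the reported scores. The short sketch below reproduces them; the variable names are illustrative, and only the three percentages come from the benchmark results.

```python
from decimal import Decimal

# Reported accuracy scores, in percent (taken from the findings above).
top_score = Decimal("77.3")   # GPT-5.4
runner_up = Decimal("66")     # Gemini 3.1 Pro
gpt4_score = Decimal("19.8")  # GPT-4

# Share of tasks the best model still gets wrong.
failure_rate = Decimal("100") - top_score

# Lead of the top model over the second-best model, in percentage points.
lead = top_score - runner_up

# Gap between the newest and the older OpenAI model, in percentage points.
generation_gap = top_score - gpt4_score

print(f"Top-model failure rate: {failure_rate}%")   # 22.7%, roughly 1 in 4
print(f"Lead over runner-up: {lead} points")        # 11.3 points
print(f"Gap to GPT-4: {generation_gap} points")     # 57.5 points
```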

“Even the best model still fails about one in four accounting tasks. That’s why AI needs workflow guardrails before it can run financial operations autonomously.”

— Santiago Nestares, Cofounder, DualEntry

AI Model Accuracy on Accounting Workflows

Accuracy of leading AI models across 101 accounting workflow tasks in the DualEntry benchmark.

AI Accounting Benchmark Leaderboard

Full Model Comparison

Models That Struggled Most

The results illustrate the rapid evolution of reasoning models, with newer models significantly outperforming earlier generations: GPT-4 scored 19.8% on the same task set on which GPT-5.4 scored 77.3%.

What This Means for Enterprise AI

Large language models are increasingly capable of generating structured text, categorizing transactions, and drafting journal entries. These capabilities can accelerate repetitive accounting tasks such as first-pass transaction classification and draft financial reporting.

However, accounting systems do not run on drafts.

Financial operations depend on validated records: entries that balance, reconciliations that resolve to zero, and reports that withstand audit scrutiny. The distance between a plausible draft and a validated record is where operational risk emerges.

“Large language models are powerful drafting tools, but finance doesn’t run on drafts; it runs on validated records,” said Santiago Nestares, co-founder of DualEntry. “The benchmark shows that AI can accelerate accounting workflows, but without system-level controls and validation, errors can quickly cascade through financial reporting.”
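
To make the distinction between a draft and a validated record concrete, here is a minimal sketch of one such control: a check that a drafted journal entry balances before it is accepted. The `is_balanced` function, the field names, and the sample accounts are assumptions made for illustration; they do not describe DualEntry's implementation.

```python
from decimal import Decimal


def is_balanced(lines):
    """Return True when total debits equal total credits (hypothetical helper)."""
    debits = sum((Decimal(line["debit"]) for line in lines), Decimal("0"))
    credits = sum((Decimal(line["credit"]) for line in lines), Decimal("0"))
    return debits == credits


# A plausible-looking draft with transposed digits on the credit side.
draft_entry = [
    {"account": "6100 Office Supplies", "debit": "250.00", "credit": "0"},
    {"account": "1000 Cash", "debit": "0", "credit": "205.00"},
]

# The same entry after correction.
corrected_entry = [
    {"account": "6100 Office Supplies", "debit": "250.00", "credit": "0"},
    {"account": "1000 Cash", "debit": "0", "credit": "250.00"},
]

for name, entry in [("draft", draft_entry), ("corrected", corrected_entry)]:
    status = "accepted" if is_balanced(entry) else "rejected: debits != credits"
    print(f"{name}: {status}")
```

Amounts are parsed as `Decimal` rather than floats so that the equality check is exact. A real system would layer further controls on top, such as account validity and period checks.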

Benchmark Methodology

The benchmark was designed as a task-oriented evaluation of real accounting workflows, rather than trivia-style knowledge questions.

A total of 101 accounting tasks were constructed using a provisioned chart of accounts and minimal context designed to simulate real operational environments.

Tasks were divided into eight workflow categories.

Each model was tested in an isolated environment with no connection to external financial systems.

All responses were graded using deterministic binary scoring, correct or incorrect, with no partial credit or subjective interpretation.

Each model could be run multiple times; the results of these runs were used to compute overall accuracy and to assign difficulty tiers.
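
The scoring approach described above can be sketched as follows. This is an illustration under stated assumptions, not the benchmark's grading code: exact-match comparison stands in for the deterministic grader, and the tier thresholds (0.8 and 0.4) and all function and task names are hypothetical, since the methodology does not specify them.

```python
from collections import defaultdict


def score(expected, actual):
    """Binary score: 1 for an exact match, 0 otherwise. No partial credit."""
    return 1 if expected == actual else 0


def summarize(runs):
    """Aggregate (task_id, expected, actual) tuples, with several runs per task allowed."""
    per_task = defaultdict(list)
    for task_id, expected, actual in runs:
        per_task[task_id].append(score(expected, actual))
    all_scores = [s for scores in per_task.values() for s in scores]
    accuracy = sum(all_scores) / len(all_scores)
    pass_rates = {task: sum(s) / len(s) for task, s in per_task.items()}
    return accuracy, pass_rates


def tier(pass_rate):
    """Map a task's pass rate to a difficulty tier (thresholds are assumed)."""
    if pass_rate >= 0.8:
        return "easy"
    if pass_rate >= 0.4:
        return "medium"
    return "hard"


# Two hypothetical tasks, two runs each; answers are account codes.
runs = [
    ("classify-001", "6100", "6100"),
    ("classify-001", "6100", "6100"),
    ("journal-014", "1000", "1200"),
    ("journal-014", "1000", "1000"),
]

accuracy, pass_rates = summarize(runs)
tiers = {task: tier(rate) for task, rate in pass_rates.items()}
print(accuracy)  # 0.75
print(tiers)     # {'classify-001': 'easy', 'journal-014': 'medium'}
```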

Tested AI Providers

The benchmark evaluated models from leading AI developers including:

- OpenAI

- Google

- Anthropic

- Alibaba

- Zhipu AI

- MiniMax

- Moonshot AI

- NVIDIA

A total of 19 models were evaluated.

Full Benchmark Results

Full benchmark results and model comparisons are available here:

https://www.dualentry.com/accounting-ai-benchmark

About DualEntry

DualEntry is an AI-native ERP platform designed for companies scaling from mid-market to IPO. The platform embeds AI directly into financial workflows, including journal entry drafting, reconciliation, and reporting, while maintaining the validation controls required for enterprise accounting.

Media Contact

Ana Marturet

anam@dualentry.com

Website: https://www.dualentry.com/